By default, the listeners in an Oracle RAC installation accept registrations from any database. Out of the box, nothing restricts which databases may register. This makes it easy to create new databases without worrying about listener registration, but it can cause problems in a production environment.

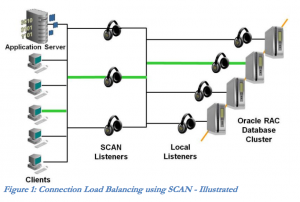

This can be dangerous in RAC environments, where each database registers with both a local and a remote listener. The local listener is just that - the listener that accepts connections for instances running on that node. The remote listener is generally associated with the Single Client Access Name, or SCAN, feature. SCAN allows databases in a cluster to register with a highly available set of listeners that act as load balancers for incoming sessions. Database clients connect to a SCAN listener and request a SERVICE_NAME, and are then redirected to a local listener to connect to. Oracle's whitepaper on SCAN (http://www.oracle.com/technetwork/products/clustering/overview/scan-129069.pdf) has a good diagram of how this looks:

SCAN listeners are designed to accept registrations from database instances, no matter where the registration is coming from. For example, imagine an 8-node Oracle RAC system with dozens of 2-instance databases spread throughout. Thanks to the LOCAL_LISTENER parameter setting, the instances will register with the listener on the node where they reside. If the REMOTE_LISTENER parameter is set to the SCAN hostname and port, they will also register with the SCAN listeners throughout the cluster. These registrations will occur no matter which node the SCAN listeners reside on - they are configured to accept registrations from anywhere. More on this problem in a moment.

Whether malicious or accidental, unwanted registrations of this kind (listener poisoning) can have detrimental effects that are sometimes very difficult to diagnose. In some cases, a database on one cluster registers with the listeners of a different cluster, causing connectivity issues. Worse, a database with the same name could cause production traffic for a service to be routed to another cluster entirely.

Initially, the solution to this problem was documented in MOS note #1340831.1, which required configuring a wallet for the listener, and secure TCP communication between database instances and the listener. Thankfully, Oracle now supports Valid Node Checking for Registration, or VNCR (MOS Note #1914282.1), in versions 11.2.0.4 and up. Setting this up requires a few parameters in the listener.ora file and bouncing the listeners.

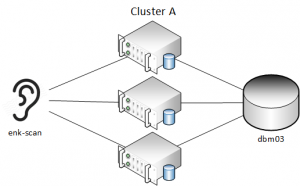

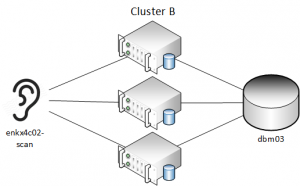

Here's an example of what this looks like in practice. We have 2 RAC clusters, each with its own SCAN hostname - Cluster A (enk-scan) and Cluster B (enkx4c02-scan). Each cluster has a database named "dbm03". Both are primary databases, and they hold different data.

Each database has a table (T) in the ACOLVIN schema with one record that shows which cluster it belongs to:

$ cat acolvin.sql

show parameter remote_listener

select name from v$database;

select * from t;

exit;

$ sqlplus -S acolvin/****@enk-scan.enkitec.local:1521/dbm03 @acolvin.sql

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

remote_listener string enk-scan:1521

NAME

-------

DBM03

COL1

--------------------

THIS IS CLUSTER A

$ sqlplus -S acolvin/****@enkx4c02-scan.enkitec.local:1521/dbm03 @acolvin.sql

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

remote_listener string enkx4c02-scan:1521

NAME

-------

DBM03

COL1

--------------------

THIS IS CLUSTER B

As you can see, both databases have the same name, but different data in T. Now, what happens to the database in cluster A if we change the remote listener parameter?

$ sqlplus / as sysdba

SQL*Plus: Release 11.2.0.4.0 Production on Mon Feb 22 18:36:00 2016

Copyright (c) 1982, 2013, Oracle. All rights reserved.

Connected to:

Oracle Database 11g Enterprise Edition Release 11.2.0.4.0 - 64bit Production

With the Partitioning, Real Application Clusters, Automatic Storage Management, OLAP,

Data Mining and Real Application Testing options

SYS:dbm031> alter system set remote_listener='enkx4c02-scan.enkitec.local:1521';

System altered.

SYS:dbm031> alter system register;

System altered.

SYS:dbm031> exit;

$ sqlplus -S acolvin/****@enkx4c02-scan.enkitec.local:1521/dbm03 @acolvin.sql

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

remote_listener string enkx4c02-scan.enkitec.local:1521

NAME

-------

DBM03

COL1

--------------------

THIS IS CLUSTER A

Even though the dbm03 database is up and running on Cluster B, new sessions that request dbm03 through enkx4c02-scan can land on the database on Cluster A, as this one did. This is definitely not what we would want to happen.
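One way to spot a stray registration like this is to look at which hosts a SCAN listener is prepared to hand sessions to. The sketch below is a small, hypothetical helper (`registered_hosts` is not an Oracle tool) that pulls the `HOST=` values out of `lsnrctl services` output. It assumes the usual layout, where each remote handler is printed as an `(ADDRESS=(PROTOCOL=TCP)(HOST=...)(PORT=...))` line.

```shell
#!/usr/bin/env bash
# registered_hosts: hypothetical helper, not part of any Oracle tooling.
# Reads `lsnrctl services <listener>` output on stdin and prints the unique
# HOST= values found in the handler address lines.
registered_hosts() {
  grep -o 'HOST=[^)]*' | cut -d= -f2 | sort -u
}

# Example (run as the Grid Infrastructure owner on the node hosting the listener):
#   lsnrctl services LISTENER_SCAN1 | registered_hosts
```

Any host in that list that does not belong to the local cluster is worth investigating.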

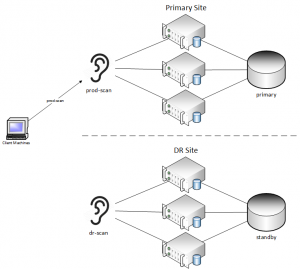

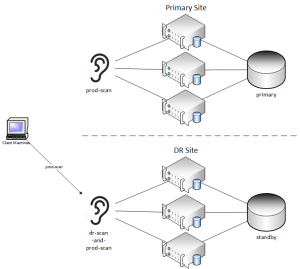

You may be saying - "well, that doesn't apply to us - we wouldn't do something that crazy." Keep in mind that this can happen inadvertently. Take an example with primary and standby systems and many different applications. To avoid changing the applications during a DR event, the DNS records for the SCAN are changed instead. We see this frequently on customer systems because it makes the failover process very simple. To keep the applications available, some clients also choose to switch over to DR while performing maintenance at the primary site. The setups are slightly different between normal operations and DR runtime:

As mentioned before, DNS records are changed during a DR event. By only changing DNS entries, the applications do not need any changes whatsoever. This can lead to easy switchover operations, as DNS changes can be automated very easily. As you can see below, client machines will be directed to the DR site, but the primary site will still utilize the prod-scan hostname as well.

This will work without an issue if there is an actual failure on the primary site, but what if databases come online at the primary site and still attempt to register their service names with the remote listener? DNS will resolve the hostname in the REMOTE_LISTENER parameter to the DR site, and the primary instances will register with the DR SCAN listeners. Client machines can then be accidentally directed back to the primary site, where the databases may only be running in mount mode.

The solution to this is to configure VNCR, which was mentioned above. VNCR is set up by adding a few parameters to the listener.ora file on each node in the cluster. For an 11.2.0.4 installation, the following parameters need to be added on each host:

VALID_NODE_CHECKING_REGISTRATION_

VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN1

REGISTRATION_INVITED_NODES_LISTENER_SCAN1

VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN2

REGISTRATION_INVITED_NODES_LISTENER_SCAN2

VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN3

REGISTRATION_INVITED_NODES_LISTENER_SCAN3

The VALID_NODE_CHECKING_REGISTRATION parameter accepts 3 values:

- OFF - there are no restrictions on listener registrations

- LOCAL - listener registrations are only allowed from the local host

- SUBNET - listener registrations are allowed from any host on the subnet
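To make the effect of these modes concrete, here is a simplified model of the accept/reject decision, combined with an invited-node list (the REGISTRATION_INVITED_NODES parameters listed above). This is only a sketch of the behavior as described in this post, not Oracle's implementation. The function names, the argument order, and the CIDR form used for the subnet are all assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Simplified, illustrative model of VNCR. All names here are hypothetical.

# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# in_list VALUE "comma,separated,list": succeeds if VALUE is in the list.
in_list() {
  local needle=$1 item
  local -a items
  IFS=, read -r -a items <<< "$2"
  for item in "${items[@]}"; do
    [[ $item == "$needle" ]] && return 0
  done
  return 1
}

# in_subnet IP NETWORK/PREFIX: succeeds if IP falls inside the subnet.
in_subnet() {
  local ip net prefix mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  prefix=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  (( (ip & mask) == (net & mask) ))
}

# vncr_allows MODE SOURCE_IP LOCAL_IPS INVITED_IPS [LOCAL_SUBNET]
# Succeeds if a registration from SOURCE_IP would be accepted in this model.
vncr_allows() {
  local mode=$1 src=$2 local_ips=$3 invited=$4 subnet=${5:-}
  [[ $mode == OFF ]] && return 0             # OFF: no restrictions
  in_list "$src" "$invited" && return 0      # invited nodes are always accepted
  case $mode in
    LOCAL)  in_list "$src" "$local_ips" ;;   # only the listener's own host
    SUBNET) [[ -n $subnet ]] && in_subnet "$src" "$subnet" ;;
    *)      return 1 ;;
  esac
}
```

For example, `vncr_allows LOCAL 10.239.4.9 10.239.4.7 10.239.4.7,10.239.4.9` succeeds because the source is invited, while the same call with a source of 192.168.101.201 fails.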

The REGISTRATION_INVITED_NODES parameter specifies hosts that are allowed to register with the specified listener. Generally, it is a good idea to set the VALID_NODE_CHECKING_REGISTRATION parameter to LOCAL. The SCAN listeners need to accept registrations from all nodes in the cluster, so list the IP addresses of the RAC nodes, separated by commas, in REGISTRATION_INVITED_NODES. On the Exadata in our lab, the public IP addresses are 10.239.4.7 and 10.239.4.9. In that case, the parameters are set with:

VALID_NODE_CHECKING_REGISTRATION_LISTENER=LOCAL

VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN1=LOCAL

REGISTRATION_INVITED_NODES_LISTENER_SCAN1=(10.239.4.7,10.239.4.9)

VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN2=LOCAL

REGISTRATION_INVITED_NODES_LISTENER_SCAN2=(10.239.4.7,10.239.4.9)

VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN3=LOCAL

REGISTRATION_INVITED_NODES_LISTENER_SCAN3=(10.239.4.7,10.239.4.9)
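Since the same pattern repeats for every SCAN listener, the lines can be generated rather than typed by hand. The following is a minimal sketch: `gen_vncr_params` is a hypothetical helper, and it assumes the default listener names used above (LISTENER, LISTENER_SCAN1 and so on) with LOCAL checking on every listener.

```shell
#!/usr/bin/env bash
# gen_vncr_params "ip1,ip2,..." [scan_listener_count]
# Hypothetical helper: prints VNCR settings in the same layout as above.
# Assumes default listener names and VALID_NODE_CHECKING_REGISTRATION=LOCAL.
gen_vncr_params() {
  local invited=$1 scan_count=${2:-3} i
  echo "VALID_NODE_CHECKING_REGISTRATION_LISTENER=LOCAL"
  for (( i = 1; i <= scan_count; i++ )); do
    echo "VALID_NODE_CHECKING_REGISTRATION_LISTENER_SCAN${i}=LOCAL"
    echo "REGISTRATION_INVITED_NODES_LISTENER_SCAN${i}=(${invited})"
  done
}

# Example: reproduce the settings for the lab cluster.
#   gen_vncr_params 10.239.4.7,10.239.4.9 3
```

Review the output before adding it to listener.ora on each node, since the file may already contain some of these parameters.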

After setting these values and restarting all listeners (including SCAN listeners), remote databases can no longer register with the listener. From the listener.log for a SCAN listener, we see the following entry when an instance tries to register with a remote listener it doesn't have permission to register with (192.168.101.201 is the public IP of one of the other cluster nodes):

Listener(VNCR option 1) rejected Registration request from destination 192.168.101.201

22-FEB-2016 19:50:12 * service_register_NSGR * 1182

TNS-01182: Listener rejected registration of service ""
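Once VNCR is active, it can be useful to see which hosts are being turned away, since a steady stream of rejections usually points at a misconfigured REMOTE_LISTENER somewhere. The sketch below counts rejections per source address. `rejected_registrations` is a hypothetical helper, and it assumes the rejection line ends with the source IP, as in the entry above.

```shell
#!/usr/bin/env bash
# rejected_registrations LOGFILE
# Hypothetical helper: counts VNCR rejections per source address in a
# listener.log, most frequent first. Assumes the IP is the last field
# of the "rejected Registration request" line.
rejected_registrations() {
  awk '/rejected Registration request from destination/ { count[$NF]++ }
       END { for (ip in count) print count[ip], ip }' "$1" | sort -rn
}

# Example (substitute the path of the SCAN listener's log on your system):
#   rejected_registrations /path/to/listener.log
```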

With this in place, you can be confident that neither intentional nor unintentional listener poisoning will occur going forward.