Last week’s Oracle OpenWorld conference included many announcements. One that affects my work was the release of a new generation of Exadata machines, this time the X7-2. If you’ve followed Exadata development over the years, you’ll know that each release typically brings the same set of features:

- Increase in CPU core count

- Increase in disk storage (typically 2x)

- Increase in flash storage (typically 2x)

- New software features

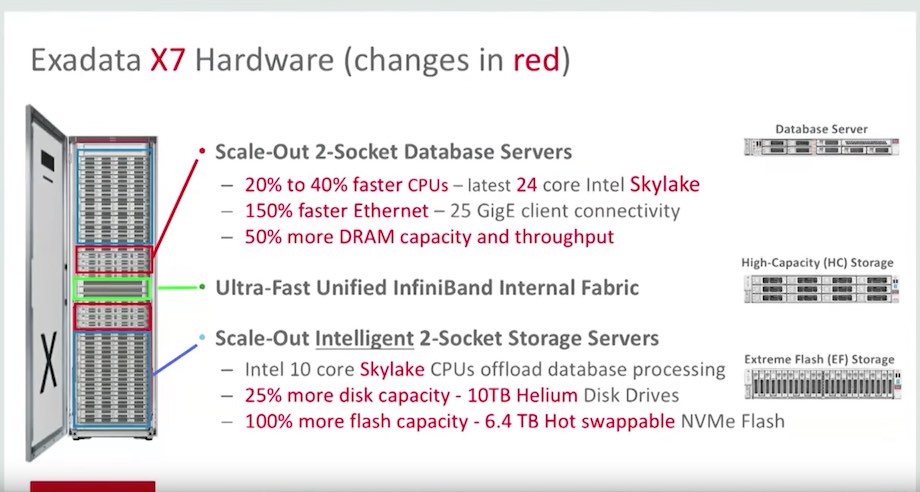

The screenshot below shows some of the hardware changes (via youtube):

What jumps out from this slide is that Oracle didn't pack more, slower cores into the database servers. Instead we're getting a small bump (22 to 24 cores per socket) with *faster* CPUs. It isn't a perfect comparison, but SPECint ratings for the Xeon Platinum 8160 (X7-2 CPU) versus the Xeon E5-2699 v4 (X6-2 CPU) show about a 25% improvement.

The base amount of RAM in the compute nodes also increases, from 256GB to 384GB. This is because the Skylake architecture provides 6 memory channels per socket rather than the 4 channels used in previous generations. Maximum memory, however, is still 1.5TB per compute node.

The compute nodes also get upgraded PCIe Ethernet cards with a 10/25GbE option. I haven’t seen many customers begging for 25GbE connectivity to their machines, but it will be a welcome addition for some. I imagine these ports are still fairly expensive on the switch side. Back when we were implementing X2-2 racks, plenty of customers balked at giving up 16 10GbE switch ports for a single database machine, and I expect a similar reaction to 25GbE ports today. Within the next couple of years, though, I think everything will move in this direction. Faster ports will become even more important as more databases are consolidated on Exadata and different network types are trunked together via VLAN tagging.
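The base-memory jump follows directly from the channel count. Here is a back-of-the-envelope check. It assumes two sockets per compute node and one 32GB DIMM in every memory channel. The DIMM size and population are assumptions, not figures from the slide.

```python
# Back-of-the-envelope check of base compute-node memory.
# Assumptions (not from the slide): 2 sockets per node, one 32GB DIMM per channel.
SOCKETS = 2
DIMM_GB = 32


def base_memory_gb(channels_per_socket: int, dimms_per_channel: int = 1) -> int:
    """Total memory when every channel on every socket holds the same DIMMs."""
    return SOCKETS * channels_per_socket * dimms_per_channel * DIMM_GB


x6_2 = base_memory_gb(channels_per_socket=4)  # Broadwell: 4 channels per socket
x7_2 = base_memory_gb(channels_per_socket=6)  # Skylake: 6 channels per socket

print(f"X6-2 base: {x6_2}GB, X7-2 base: {x7_2}GB")  # X6-2 base: 256GB, X7-2 base: 384GB
# Cores per node, using the per-socket counts from the slide (22 vs 24).
print(f"Cores per node: X6-2 {SOCKETS * 22}, X7-2 {SOCKETS * 24}")  # 44 vs 48
```

Under these assumptions, the 50% increase in base memory comes entirely from the two extra channels per socket. DIMM size is unchanged.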

The storage servers see a few pretty big bumps:

- Disk drives upgraded to 10TB each

- Flash storage doubles (again!) to 25.6TB per cell

- Flash disks are now hot-swappable

- Operating system storage moved out to separate, internal devices

- Memory upgradable to 1.5TB per storage server

- “Do not service” LED on the server front panel

The disk and flash capacity changes aren’t really anything new and were to be expected. Seeing the flash disks become hot-swappable, however, is very cool. Replacing a failed flash card has always involved some pain: ASM disks have to be taken offline, and they aren’t usable again until they have caught up on the writes they missed while they were down. Now, failed flash disks will have a status of “failed – dropped for replacement.” Once they’re in that state, they can be removed and replaced while the storage server stays online. This eliminates the need for an ASM disk resync altogether.
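Here is a minimal sketch of the replacement check. Given a set of physical-disk records, it picks out the flash disks that have reached the dropped-for-replacement state and can therefore be pulled while the cell stays up. Several details are assumptions for illustration: the record fields (`name`, `diskType`, `status`), the sample disk names, and the exact characters of the status string, which is written here with a plain hyphen. In practice the records would come from the cell's physical-disk listing.

```python
from typing import Dict, List

# Assumed spelling of the status string quoted in the post; verify on a real cell.
DROPPED_FOR_REPLACEMENT = "failed - dropped for replacement"


def flash_disks_ready_to_swap(disks: List[Dict[str, str]]) -> List[str]:
    """Return names of flash disks that can be pulled while the cell stays online."""
    return [
        d["name"]
        for d in disks
        if d["diskType"] == "FlashDisk" and d["status"] == DROPPED_FOR_REPLACEMENT
    ]


# Hypothetical sample records (names and values are illustrative only).
sample = [
    {"name": "FLASH_1_1", "diskType": "FlashDisk", "status": "normal"},
    {"name": "FLASH_2_1", "diskType": "FlashDisk", "status": "failed - dropped for replacement"},
    {"name": "12:0", "diskType": "HardDisk", "status": "normal"},
]
print(flash_disks_ready_to_swap(sample))  # ['FLASH_2_1']
```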

Another feature that has been a long time coming is the removal of the operating system partitions from the disks used by cellsrv. Oracle now includes two M.2 disks for the Linux OS. Why is this a big deal? With the OS on these separate devices, we no longer have to work around the first two disks in each cell carrying the OS. That small ~30GB slice on each of those two disks is what led to the creation of the +DBFS_DG diskgroup. Grid disks are carved at a uniform size across all disks, so the matching ~30GB on each of the remaining disks was left over and had to go somewhere. Now, all hard disks (or flash disks, for you EF users) are fully allocated to cellsrv. Since all of the celldisks will be the same size, you only need to create +DATA and +RECO going forward. This is one of my favorite changes, even though it’s a small one.
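The arithmetic behind +DBFS_DG is easy to sketch. Uniformly sized grid disks are limited by the smallest celldisk, so whatever is left over on the larger celldisks ends up in a separate diskgroup. The figures below are illustrative assumptions: 12 hard disks of 10TB per cell, a 30GB OS slice, and decimal GB.

```python
# Illustrative assumptions: 12 hard disks per cell, 10TB each (decimal GB),
# and a ~30GB OS slice on the first two disks in the old layout.
DISKS_PER_CELL = 12
DISK_GB = 10_000
OS_SLICE_GB = 30


def leftover_per_cell(celldisk_sizes):
    """Space left over after sizing uniform grid disks to the smallest celldisk."""
    uniform = min(celldisk_sizes)
    return sum(size - uniform for size in celldisk_sizes)


# Old layout: the first two disks give up the OS slice.
old_layout = [DISK_GB - OS_SLICE_GB] * 2 + [DISK_GB] * (DISKS_PER_CELL - 2)
# X7-2 layout: the OS lives on separate M.2 devices, so every celldisk is full size.
new_layout = [DISK_GB] * DISKS_PER_CELL

print(leftover_per_cell(old_layout))  # 300 -> room for a +DBFS_DG-style diskgroup
print(leftover_per_cell(new_layout))  # 0 -> only +DATA and +RECO needed
```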

One of the software features that received some attention was the ability to use RAM on the storage servers as an extension of the buffer cache. This was pretty intriguing to me, because it adds a tier that sits between the buffer cache on the database servers and the flash cache on the storage servers. From what I have read, users can configure a “RAM cache” on the Exadata storage servers beginning with image version 18.1. Thanks to InfiniBand, database servers can read blocks from this cache with very low latency via RDMA. The RAM cache holds blocks as they age out of the database buffer cache. Since Oracle owns the entire stack, the database and storage servers coordinate where blocks are held. If a block is pulled from the RAM cache into the database buffer cache, it is removed from the RAM cache entirely. This prevents blocks from being stored in two different tiers of memory.
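The “no block in two tiers” behavior can be shown with a toy model of an exclusive two-tier cache. Blocks evicted from a small LRU “buffer cache” drop into a “RAM cache.” A hit in the RAM cache moves the block back up and deletes it from the lower tier. This is a simplified sketch of the idea described above, not Oracle's implementation. The class name, the capacities, and the LRU policy in both tiers are assumptions.

```python
from collections import OrderedDict


class ExclusiveTwoTierCache:
    """Toy model (hypothetical): a block lives in at most one tier at a time."""

    def __init__(self, buffer_capacity: int, ram_capacity: int):
        self.buffer = OrderedDict()  # upper tier (database buffer cache), LRU order
        self.ram = OrderedDict()     # lower tier (storage RAM cache), LRU order
        self.buffer_capacity = buffer_capacity
        self.ram_capacity = ram_capacity

    def read(self, block_id: str) -> str:
        """Return which tier served the block: 'buffer', 'ram', or 'disk'."""
        if block_id in self.buffer:
            self.buffer.move_to_end(block_id)  # refresh LRU position
            return "buffer"
        if block_id in self.ram:
            del self.ram[block_id]  # exclusive: remove it from the lower tier
            source = "ram"
        else:
            source = "disk"  # stands in for flash/disk on the storage server
        self._insert_buffer(block_id)
        return source

    def _insert_buffer(self, block_id: str) -> None:
        self.buffer[block_id] = True
        if len(self.buffer) > self.buffer_capacity:
            victim, _ = self.buffer.popitem(last=False)  # age out the LRU block...
            self.ram[victim] = True                     # ...into the RAM cache
            if len(self.ram) > self.ram_capacity:
                self.ram.popitem(last=False)            # RAM cache evicts its own LRU


cache = ExclusiveTwoTierCache(buffer_capacity=2, ram_capacity=2)
sources = [cache.read(b) for b in ["A", "B", "C", "A"]]
print(sources)  # ['disk', 'disk', 'disk', 'ram']
```

After the final read, "A" is back in the buffer tier and no longer in the RAM tier. "B", which aged out to make room for it, is the only block in the RAM tier.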

I upgraded the Enkitec X4-2 Exadata rack to image version 18.1 this week and saw the new ramCache attributes:

```
[root@enkx4cel07 ~]# cellcli -e list cell detail | egrep 'ram|releaseVersion|rpmVersion'
ramCacheMaxSize: 0
ramCacheMode: Auto
ramCacheSize: 0
releaseVersion: 18.1.0.0.0.170915.1
rpmVersion: cell-18.1.0.0.0_LINUX.X64_170915.1-1.x86_64
```
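If you want to compare these settings across many cells, the `name: value` lines above are easy to parse. The sketch below assumes the output format shown above, with one attribute per line and the name separated from the value by the first colon. The function name is hypothetical.

```python
def parse_cell_detail(text: str) -> dict:
    """Parse 'attribute: value' lines from CellCLI 'list cell detail' output."""
    attrs = {}
    for line in text.splitlines():
        if ":" not in line:
            continue  # skip blank lines or anything that isn't an attribute
        name, value = line.split(":", 1)  # split on the first colon only
        attrs[name.strip()] = value.strip()
    return attrs


output = """\
ramCacheMaxSize: 0
ramCacheMode: Auto
ramCacheSize: 0
releaseVersion: 18.1.0.0.0.170915.1
rpmVersion: cell-18.1.0.0.0_LINUX.X64_170915.1-1.x86_64
"""
detail = parse_cell_detail(output)
# A ramCacheSize of 0 is consistent with no RAM cache being allocated on this X4 cell.
print(detail["ramCacheMode"], detail["ramCacheSize"])  # Auto 0
```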

Sadly, the RAM cache feature is only available for X6-2 and X7-2 storage servers. I tested it out in hopes that the documentation wasn’t right, but didn’t have any luck on our X4. I hope to get my hands on a supported model shortly so that I can test this feature out.

You can read about the new features of Exadata storage server version 18.1 here, or see the datasheet for the Exadata X7-2 here.

Yup, many improvements over the X6, but InfiniBand speed is still outdated (Oracle marketing claims it is “Ultra Fast”), even though 56 Gbps has been available for many, many years.

Greg,

That was one of the first things that I thought of – I was surprised to see Ethernet throughput increase before InfiniBand. Knowing that FDR and EDR connectivity has been out there for a while, I would have expected to see new equipment on that front. We may just have to wait for Oracle to start selling FDR/EDR InfiniBand switches.

Thanks,

Andy

Hi Andy,

Interesting post, thank you!

Do you know anything about Intel 3D XPoint in the Exadata X7-2?

What is its role? What is it for?

Regards,

Yury

Yury,

My understanding is that this is still “on the roadmap” – not going to be available in the X7-2.

Thanks,

Andy

Andy/Yury, it is my understanding that the RAM cache is actually an architectural change that was sparked by 3D XPoint. Juan Loaiza mentions this in this video: https://youtu.be/A-EibGxuDag at around 11:52.

Thank you, gentlemen 🙂

One more question: I read the X7-2 datasheet,

http://www.oracle.com/technetwork/database/exadata/exadata-x7-2-ds-3908482.pdf

looking for the 1/8 configuration.

And I see “Eighth Rack (X6-2)”.

As I understand it, the 1/8 X7-2 is in fact a 1/4 X6-2!

Can anybody say anything about the X7-2 Eighth Rack?

Thank you!

Yury