As business has picked up since OpenWorld (didn't think that was possible, but that's another story for another day), we have been seeing more customers adopt or seriously look at Exadata as an option for new hardware implementations. While many will complain that there isn't enough room for customization in the rigid process of configuring an Exadata system, there are still many possibilities to make your Exadata your own, whether it's during the initial configuration phase or shortly thereafter. Of course, some of these modifications can be difficult to implement after the system is up and running with users logging in. I'm planning on starting a series of posts regarding a couple of the hot-button topics with regard to Exadata configuration - ASM diskgroup layout (the topic for today), role separated vs standard authentication, and so on. As these topics have no right answers, I'm more than open to a dialogue where you may disagree. On to the good stuff!

A Quick Primer - The Exadata Storage Architecture

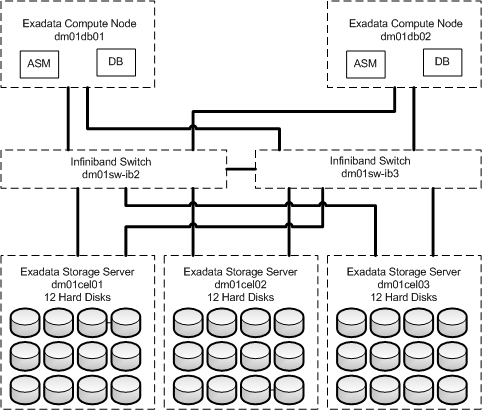

Ok...so we're looking at Exadata specifically in this post. In the examples listed below, we'll discuss a quarter rack (3 storage cells, 36 disks), since it's the easiest to diagram. To expand to half or full racks, just adjust the number of cells (7 or 14) and disks (84 or 168) accordingly. To see the relationship between the compute nodes (database servers), InfiniBand switches, and storage servers, refer to figure 1:

Figure 1 - Exadata Infiniband/Storage Connectivity

As you can see, each storage server has 12 physical disks that are carved up for the use of ASM. Figure 2 shows the relationship between physicaldisks, celldisks, and griddisks. What we're really interested in are the griddisks.

Figure 2 - Exadata Disk Relationship (Oracle's pretty diagram)

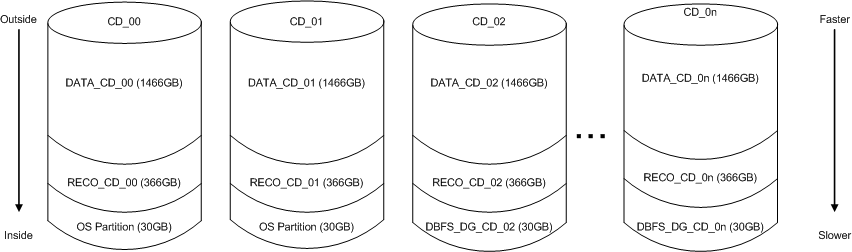

To expand upon the last piece of the diagram, we have a representation of what the celldisks look like when carved up according to the default installation on Exadata. This shows the first 3 disks, followed by disk "n" which represents the remaining disks.

Figure 3 - Default Exadata Griddisk Layout (My ugly diagram)


On each cell, the first 2 disks have approximately 30GB carved off for the operating system. On the remaining disks, the same amount of space is used to create the DBFS_DG (formerly SYSTEMDG) griddisks, which evens out the usable space across all of the disks. After that, a griddisk for DATA is created (starting from the outside tracks for the best performance), followed by the RECO griddisk. These griddisks are what translate to disks in the ASM instances.
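To put rough numbers on the default layout, here is a minimal Python sketch that counts physical disks and griddisks per rack size. It assumes 12 disks per cell, DATA and RECO griddisks on every disk, and DBFS_DG griddisks only on the 10 disks per cell that do not hold the operating system slice. The function and constant names are made up for illustration and are not part of any Oracle tool.

```python
# Assumptions (illustrative only): 12 disks per cell, OS slice on the first 2.
DISKS_PER_CELL = 12
OS_DISKS_PER_CELL = 2
CELLS_PER_RACK = {"quarter": 3, "half": 7, "full": 14}


def griddisks_per_cell():
    """Griddisks created on one cell for each default diskgroup."""
    return {
        "DATA": DISKS_PER_CELL,
        "RECO": DISKS_PER_CELL,
        # DBFS_DG only uses the disks that do not carry the OS slice.
        "DBFS_DG": DISKS_PER_CELL - OS_DISKS_PER_CELL,
    }


def rack_summary(rack_size):
    """Physical disk and griddisk counts for a whole rack."""
    cells = CELLS_PER_RACK[rack_size]
    summary = {"physical_disks": cells * DISKS_PER_CELL}
    for diskgroup, count in griddisks_per_cell().items():
        summary[diskgroup] = cells * count
    return summary


if __name__ == "__main__":
    for size in CELLS_PER_RACK:
        print(size, rack_summary(size))
```

For the quarter rack this works out to 36 physical disks, 36 griddisks each for DATA and RECO, and 30 for DBFS_DG.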

What's Different with Exadata?

On a traditional SAN, the typical approach is to create multiple diskgroups, and allocate a LUN for each diskgroup. Each LUN would be made up of different disks. Whether the decision to create multiple diskgroups is based on separating entire databases from each other or even ensuring that indexes and tables do not share spindles, it is up to the DBAs and storage administrators to design a storage strategy that best fits the specific environment. Using this strategy, the I/O on one diskgroup would not affect the others. Once again, all of this changes with Exadata.

As mentioned earlier, the default configuration on Exadata is to have 3 diskgroups - DATA, RECO, and DBFS_DG. The diskgroups span all of the disks in the rack, and each physical disk is used by each of the diskgroups. This is done in order to maximize performance by using as many spindles as you can. Whether you have 1 diskgroup or 5 diskgroups on Exadata, they will still be sharing the same physical disks. While it is possible to only use certain disks (0-5) for one disk group and others (6-11) for another diskgroup, this will result in a degradation of performance, due to only working on half of the available disks.
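The spindle-sharing point can be sketched in a few lines of Python. This is only a toy model of a quarter rack: it tracks which physical disks each diskgroup touches and ignores everything else (caching, flash, actual I/O rates). The diskgroup names `DG_A` and `DG_B` and all function names are hypothetical.

```python
from itertools import product

CELLS = 3            # quarter rack
DISKS_PER_CELL = 12

# Every physical disk identified as (cell number, disk number).
ALL_DISKS = set(product(range(CELLS), range(DISKS_PER_CELL)))


def shared_layout(diskgroups):
    """Default style: every diskgroup has a griddisk on every disk."""
    return {dg: set(ALL_DISKS) for dg in diskgroups}


def split_layout():
    """Alternative: disks 0-5 for one diskgroup, disks 6-11 for another."""
    return {
        "DG_A": {(c, d) for c, d in ALL_DISKS if d <= 5},
        "DG_B": {(c, d) for c, d in ALL_DISKS if d >= 6},
    }


def spindle_fraction(layout):
    """Fraction of the rack's spindles available to each diskgroup."""
    return {dg: len(disks) / len(ALL_DISKS) for dg, disks in layout.items()}


if __name__ == "__main__":
    print(spindle_fraction(shared_layout(["DATA", "RECO"])))
    print(spindle_fraction(split_layout()))
```

In the shared layout every diskgroup reaches all 36 spindles, while the split layout leaves each diskgroup with half of them.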

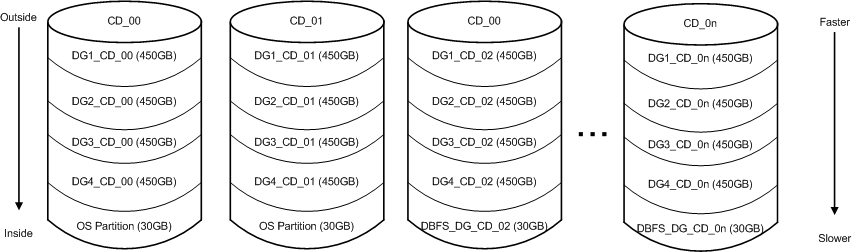

The other option for multiple diskgroups is to drop the default griddisks and create new ones based on a new layout of diskgroups. The original diskgroups and griddisks (DATA, RECO) would be dropped, and new diskgroups would be created. In the example below, I use DG1, DG2, DG3, DG4, and keep the original DBFS_DG. As you can see, objects in DG1 should see better performance than DG4, due to the location on the disk. The biggest issue that I see is the lack of flexibility after creating a layout like this. It is my preference to spend as much time as possible with customers before building out the Exadata databases to find out how things should be laid out, especially the storage.

Figure 4 - Multiple Exadata Diskgroups Layout

One of the things that I have learned from the various Exadata projects that I have been on is that once "the business" begins to see the performance gains from the original system that the Exadata was purchased for, other databases are sure to follow. While you may have originally purchased that half rack for a PeopleSoft deployment, the BI folks start thinking about what Exadata can do for them, the data warehouse people begin to lobby to place their databases on the system, and suddenly there are twice as many databases running as originally planned. What happens when you created diskgroups based on the 5 different PeopleSoft (not that there would only be 5 PSFT databases) or EBS databases that were originally envisioned to run on that Exadata? With the storage segmented, it's hard to fit the new databases into the space left over in the various diskgroups. It's not as simple as adding a new LUN (short of purchasing more storage servers, although I'd always be up for that) for the reasons listed above.
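A small Python sketch shows why leftover space in a segmented layout is harder to use. The free-space figures below are invented for illustration, and the sketch assumes the new database's files should all live in a single diskgroup.

```python
def diskgroups_that_fit(free_tb_by_diskgroup, needed_tb):
    """Return the diskgroups with enough free space to hold the new database alone."""
    return [dg for dg, free in free_tb_by_diskgroup.items() if free >= needed_tb]


# Hypothetical free space (TB) left in a segmented layout.
segmented = {"DG1": 1.5, "DG2": 2.0, "DG3": 1.0, "DG4": 1.5}

# The same free space if it had all been kept in one DATA diskgroup.
pooled = {"DATA": sum(segmented.values())}

new_database_tb = 4.0

if __name__ == "__main__":
    print(diskgroups_that_fit(segmented, new_database_tb))  # []
    print(diskgroups_that_fit(pooled, new_database_tb))     # ['DATA']
```

There are 6 TB free in both cases, but only the pooled layout has room for the 4 TB newcomer in one place.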

Say your diskgroups originally followed a naming strategy where they were named after the database that resided in them. What happens if you need to add another database in there? While it is possible to rename a diskgroup (see Metalink note #948040.1), you would end up in the very sticky situation where the ABC griddisks belong to the XYZ diskgroup. Not something that I would want to have to remember.

Where Does This Leave Us?

This raises the question - what is gained by creating multiple diskgroups? While I understand the argument that segmenting the disk usage keeps one database from gobbling up all of the available free space unexpectedly, I don't see this as a common occurrence in the Exadata world. Certainly not as often as I see the other items above. We are talking about terabytes of space here, after all. Also, if the DBAs are going to take on the additional role of storage administrator on Exadata (as is usually the case), then it's time for us to be big boys (and girls), and own it. It's not like managing the storage on Exadata is very tricky. Once the storage is configured, there's very little to it. There is no need to bother with zoning Fibre Channel switches, configuring storage groups, or worrying about which LUNs contain which disks. Yes, there are disk failures, but that's handled very well by ASM these days. If you're feeling frisky, you can play around with ASM's "Intelligent Data Placement" to create "hot" and "cold" areas of the diskgroup to better manage performance.

Just like many other aspects of being a DBA on Exadata, the storage layout is different from a traditional system. Based on the requirements and the architecture, administrators are being forced to think differently and look for new solutions. As I said above, if you disagree, please feel free to drop a comment and defend your position. As with most things Oracle, there isn't one right answer.


Andy – For the majority of installations I would agree that RECO, DATA, and DBFS_DG would be acceptable and allow the greatest flexibility for future management. My question would be for a specific sector of businesses, the ones that don’t like to share SAN space, data, etc., in particular government bodies. In your opinion, how do you best handle issues where it isn’t so much technical as it is a political issue? It would be a problem seen in consolidation projects, for example three “organizations” within a government body want to share an Exadata system, but not data so storage can’t be co-mingled. Options could be ASM security, DB security, segregate storage servers associated to database machines, or just add specific disk groups for each database across all storage nodes (which is less “secure” but perhaps more manageable). I wonder which of those options still allows the greatest management ability, without compromising the security and political policies of such an organization.

— Bill

Andy, Good write up on Disk group planning. We recently acquired an Exadata 2-2 Quarter Rack and were facing the same dilemma – will the default disk groups (data, reco and dbfs_dg) be sufficient, especially if we’re going to consolidate?

However, my concern was on the design of the grid disks. Some of the grid disks fall under the same failure group within the same cell. What that means is during a patch, we may have problems in terms of availability of data if the grid disk and the failure group is on the same cell.

My question though was can we create the grid disks such that their failure group would be on a separate cell and that an outage of a Storage cell (during patching ) would not result in a downtime for the database ?

Thanks

Vin

Vin,

The reason that each cell is a failgroup unto itself is to ensure that no 2 copies of data are placed on the same cell. If you had both copies of a block in a normal redundancy diskgroup on one cell and it went offline, the entire diskgroup would be offline until that cell came back.

If you’re planning on performing rolling cell patches, I would strongly advise using high redundancy on your diskgroups, so that while you are degrading your mirror, the loss of a single disk during the process would not take you offline.

Hi Vin,

First of all, Exadata makes sure that no two cells come under one failgroup. Every cell has its own failgroup, so the mirror copy always sits on a different cell server.

During a patch, you can opt for either ROLLING or NON-ROLLING. ROLLING is the preferable option for database availability, as you apply the patch to one cell at a time, as opposed to NON-ROLLING, where all cells need to be shut down.

In case of un-availability of a cell – ASM will read the data from its mirror copy (another cell)

This discussion can go on further in depth in respect to PST (Partnership and Status Table)

Regards,

Sunil Bhola

I’m not a DBA, but I would like to understand how ASM’s “Intelligent Data Placement” can improve performance when the LUNs are “virtualized” by the storage SAN. EVA from COMPAQ was the first, but now all storage arrays spread data around different spindles, so I don’t understand.

Andy, Nice article. From a management standpoint, having 1 DG for all databases works great…. allocate once all the space and forget.

However, we recently had an incident where our storage (not Exadata), which has striped disks, had issues with one of the disks and the diskgroup went offline. If this were to happen with a single DATA diskgroup instead of having one DG per database, would the model you discussed not be an HA issue?

Thanks